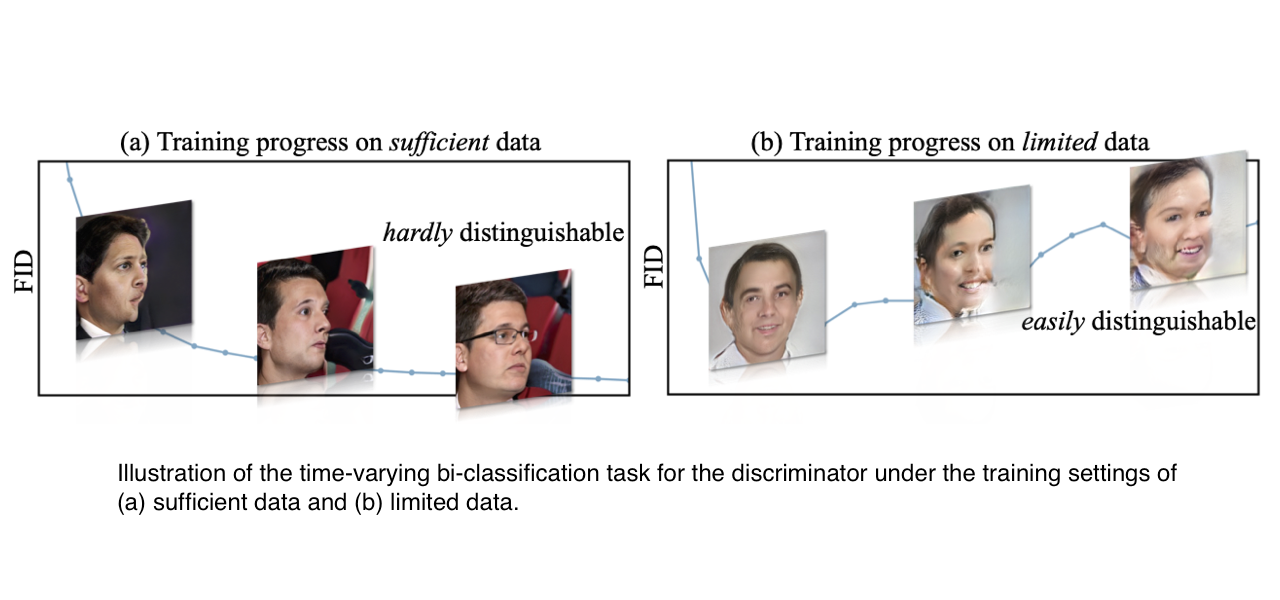

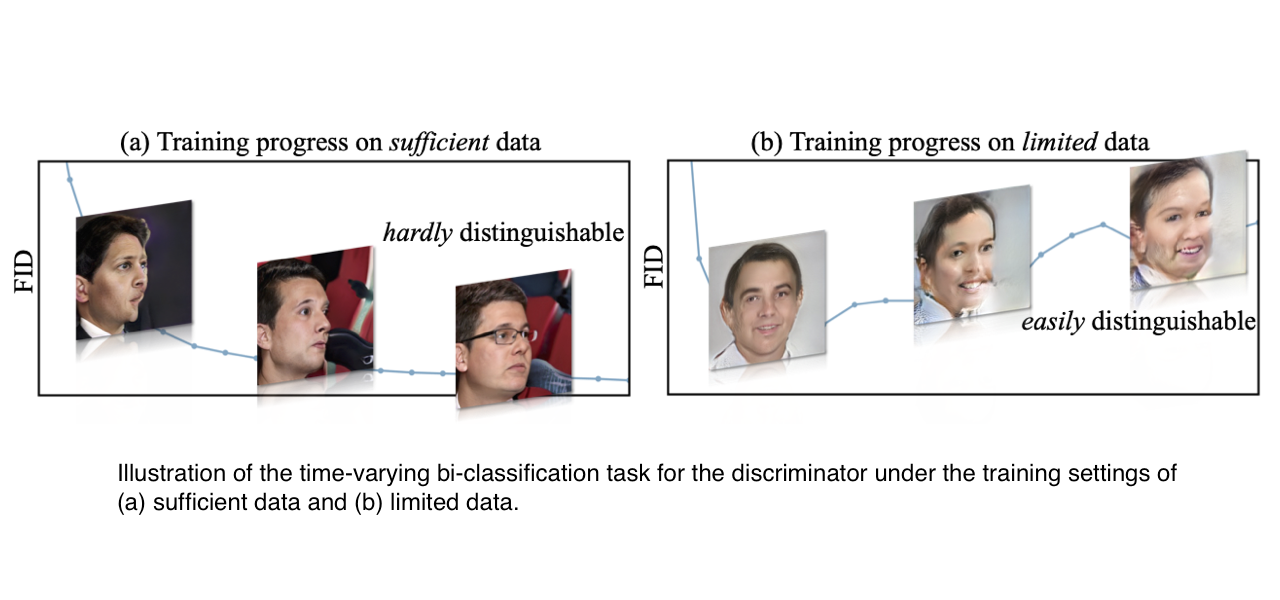

In DynamicD, we argue that the training-time synthesis distribution of GANs keeps varying and thus affects the bi-classification task of the discriminator, which in turn leads to various notorious issues in GAN training. We therefore propose an on-the-fly adjustment of the discriminator's capacity that better accommodates this time-varying task. The proposed training strategy, termed DynamicD, is general and effective across different synthesis tasks and dataset scales, without incurring any additional computation cost or training objectives.
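As a rough illustration of what an on-the-fly capacity adjustment could look like, the sketch below schedules how many channels of a discriminator layer are active at a given training step. The linear schedule, the function names (`channel_fraction`, `active_channels`), and the default fractions are all assumptions made for illustration; the summary above does not specify how DynamicD actually adjusts capacity.

```python
def channel_fraction(step, total_steps, start=0.5, end=1.0):
    """Linearly interpolate the fraction of active channels (hypothetical schedule)."""
    # Clamp progress to [0, 1] so steps outside the schedule stay well defined.
    progress = min(max(step / total_steps, 0.0), 1.0)
    return start + (end - start) * progress


def active_channels(step, total_steps, max_channels, start=0.5, end=1.0):
    """Number of channels a layer would use at this step (at least one)."""
    fraction = channel_fraction(step, total_steps, start, end)
    return max(1, round(max_channels * fraction))
```

With `start < end` the layer width grows over training; swapping the two values shrinks it instead. Either direction only changes which existing channels are used, so no extra loss term is involved in this sketch.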

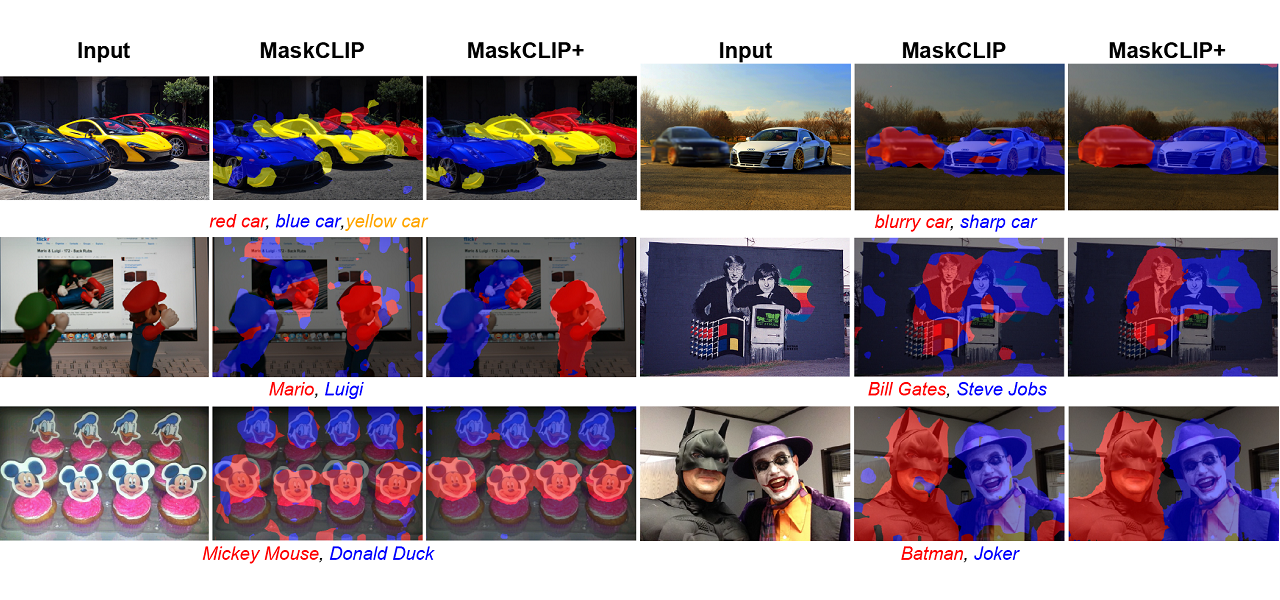

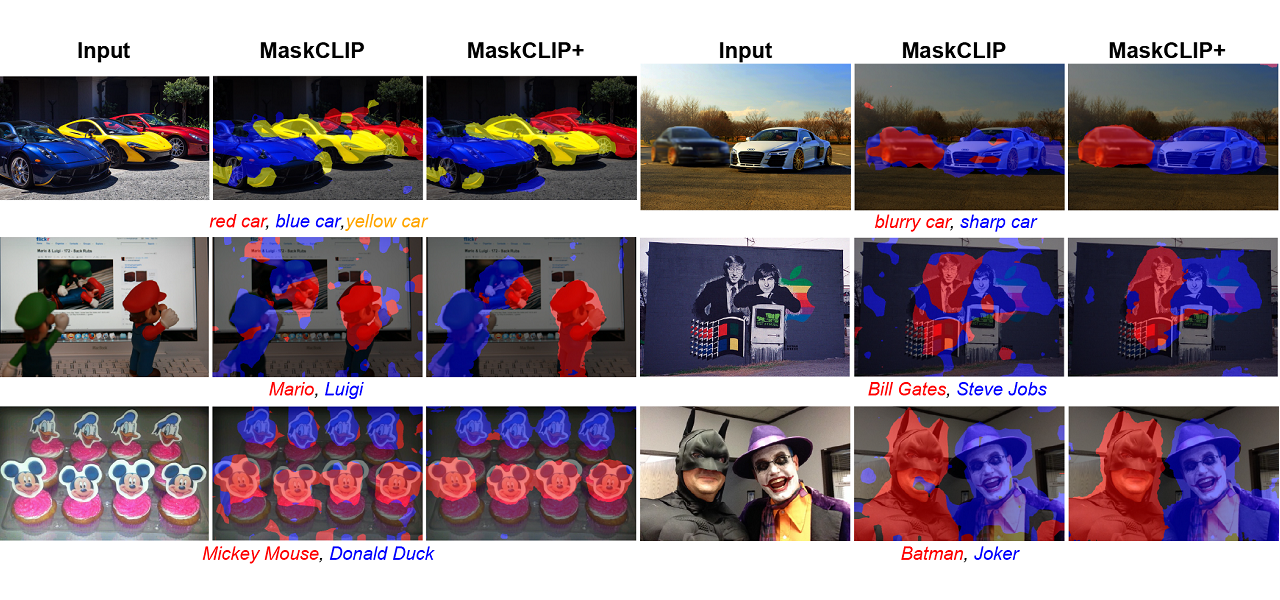

In MaskCLIP, we present a pioneering study that examines the intrinsic potential of CLIP for pixel-level dense prediction, specifically semantic segmentation. With minimal additional design, MaskCLIP significantly improves SOTA transductive zero-shot semantic segmentation methods (from 35.6/20.7/30.3 to 86.1/66.7/54.7). Our study also reveals the robustness of MaskCLIP and its capability to discriminate fine-grained objects and novel concepts (e.g., Joker and Batman).

In BungeeNeRF, we extend NeRF to large-scale scenes whose views are observed at drastically different scales, such as city scenes with views ranging from satellite-level to ground-level imagery. Such extreme scale variance poses great challenges to existing NeRF variants. BungeeNeRF follows a progressive growing paradigm that effectively activates high-frequency positional encoding (PE) channels and successively unfolds more complex details as training proceeds, resulting in high-quality rendering at different levels of detail.
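The idea of progressively activating high-frequency PE channels can be sketched as a mask over a standard NeRF-style positional encoding. This is a simplified illustration, not the BungeeNeRF implementation: the masking mechanism, the function names, and the parameters `base_freqs` and `freqs_per_stage` are assumptions.

```python
import math


def positional_encoding(x, num_freqs):
    """Encode scalar x as [sin(2^k*pi*x), cos(2^k*pi*x)] for k in 0..num_freqs-1."""
    feats = []
    for k in range(num_freqs):
        feats.append(math.sin((2 ** k) * math.pi * x))
        feats.append(math.cos((2 ** k) * math.pi * x))
    return feats


def active_freqs(stage, base_freqs, freqs_per_stage, num_freqs):
    """How many frequency bands are enabled at a training stage (hypothetical rule)."""
    return min(num_freqs, base_freqs + stage * freqs_per_stage)


def progressive_encoding(x, stage, num_freqs=10, base_freqs=4, freqs_per_stage=2):
    """Zero out the frequency bands that are not yet active at this stage."""
    feats = positional_encoding(x, num_freqs)
    n_active = active_freqs(stage, base_freqs, freqs_per_stage, num_freqs)
    # Each band contributes two channels (sin, cos), low frequencies first.
    return [f if i < 2 * n_active else 0.0 for i, f in enumerate(feats)]
```

Early stages see only the low-frequency channels, which suit coarse, distant views; later stages expose the higher frequencies needed for close-up detail.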

In TIE, we introduce a Transformer-based network for particle-based simulation that captures the rich semantics of particle interactions in an edge-free manner, so that TIE combines the merits of GNN-based approaches without suffering from their significant computational overhead. TIE is further amended with learnable material-specific abstract particles to disentangle global material-wise semantics, which helps TIE achieve superior generalization across various simulation domains.

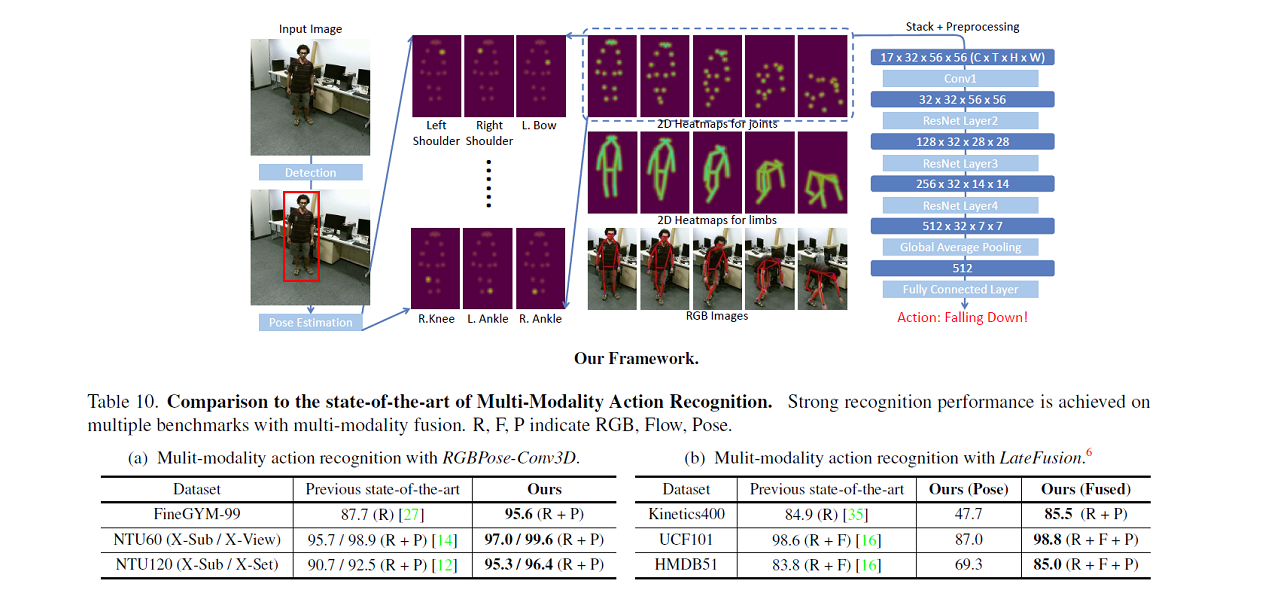

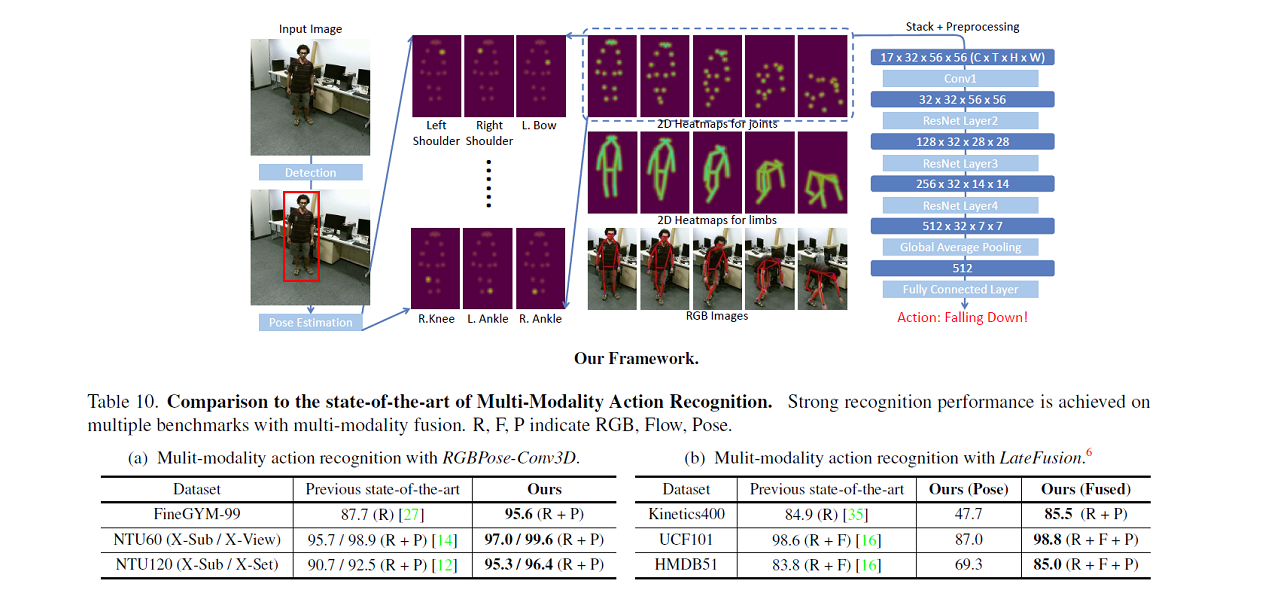

In PoseConv3D, we propose a new approach to skeleton-based action recognition that avoids the limitations of GCN-based methods in terms of robustness, interoperability, and scalability. PoseConv3D relies on CNN-based architectures as the backbone, taking a 3D heatmap volume instead of a graph sequence as the input representation of human skeletons. In this way, PoseConv3D can be easily integrated with other modalities at early fusion stages, providing a large design space to boost performance.
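To make the input representation concrete, the sketch below builds a heatmap volume from per-frame 2D keypoints by placing a Gaussian at each joint location and stacking the maps over time. It is a minimal illustration under assumptions: the `(x, y, score)` keypoint format, the Gaussian form, the `[joint][frame][row][col]` layout, and the function names are not taken from the summary above.

```python
import math


def joint_heatmap(x, y, score, height, width, sigma=1.0):
    """2D Gaussian centred on (x, y), scaled by the keypoint confidence score."""
    return [
        [
            score * math.exp(-((col - x) ** 2 + (row - y) ** 2) / (2 * sigma ** 2))
            for col in range(width)
        ]
        for row in range(height)
    ]


def heatmap_volume(keypoints, height, width, sigma=1.0):
    """keypoints[t][k] = (x, y, score); returns volume[k][t][row][col]."""
    num_frames = len(keypoints)
    num_joints = len(keypoints[0])
    return [
        [
            joint_heatmap(*keypoints[t][k], height, width, sigma)
            for t in range(num_frames)
        ]
        for k in range(num_joints)
    ]
```

Because the result is a dense grid rather than a graph, it can be fed to a 3D CNN and concatenated or fused with other grid-shaped modalities such as RGB frames.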

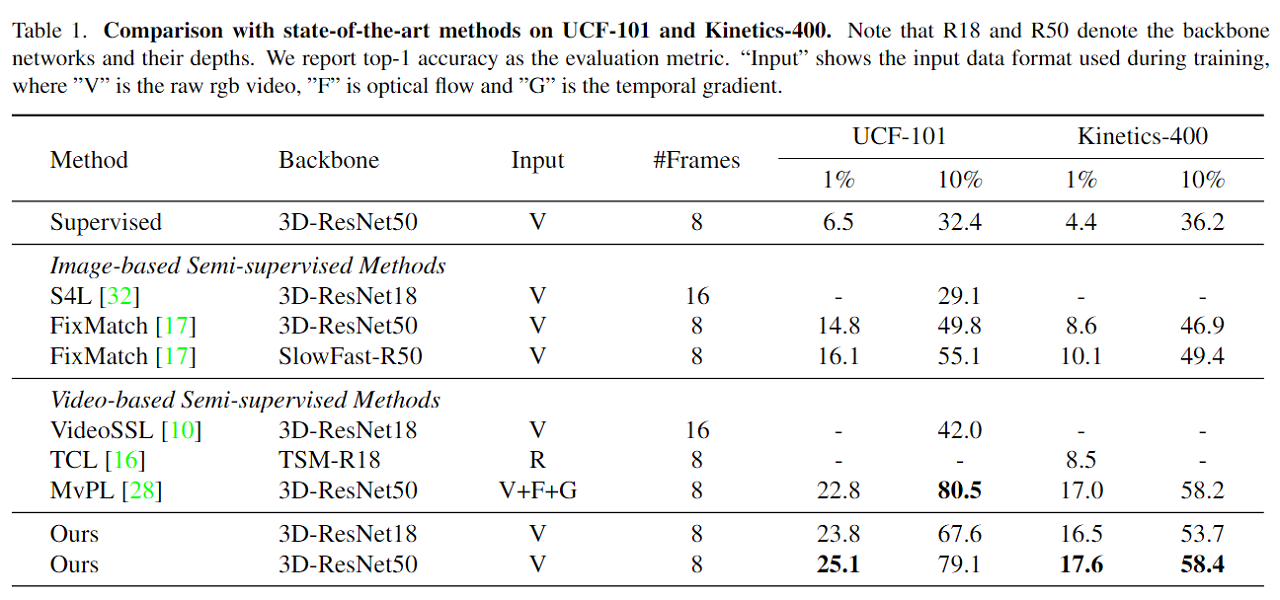

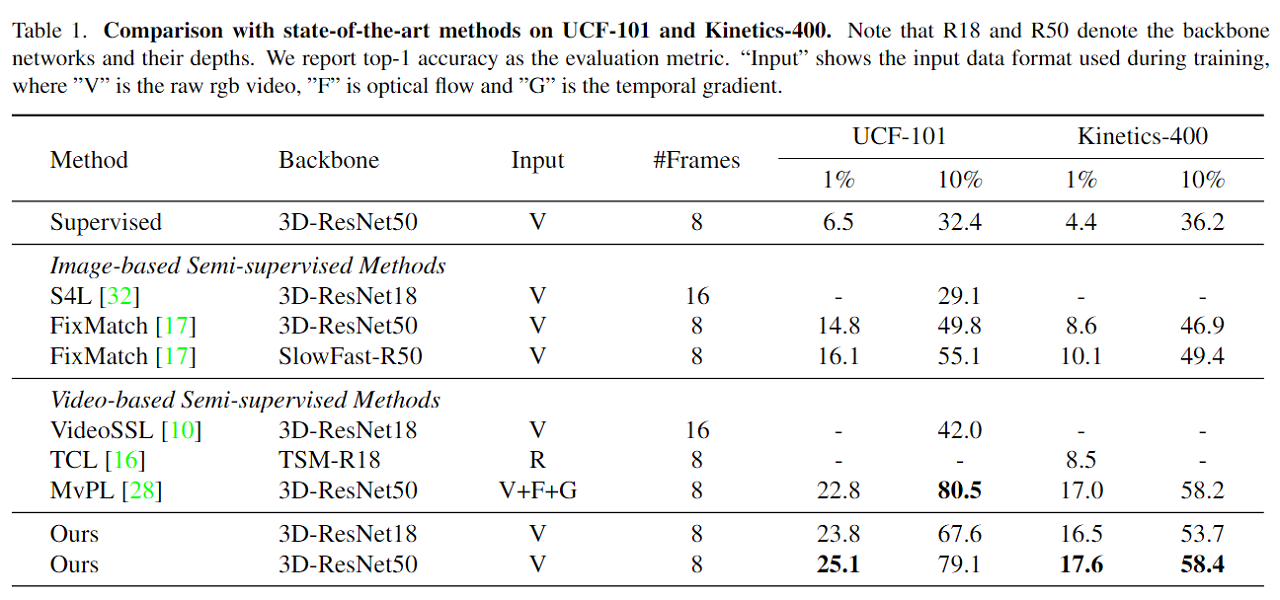

In CMPL, we propose a more effective pseudo-labeling scheme for semi-supervised action recognition, called Cross-Model Pseudo-Labeling (CMPL). Concretely, we introduce a lightweight auxiliary network in addition to the primary backbone and have the two networks predict pseudo-labels for each other. We observe that, due to their different structural biases, the two models tend to learn complementary representations and subsequently benefit each other by using cross-model predictions as supervision.
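The cross-supervision step can be sketched for a single unlabeled sample as follows. This is an illustrative sketch only: the confidence threshold, the use of weakly and strongly augmented views (a FixMatch-style convention), and all function names are assumptions rather than details stated above.

```python
import math


def pseudo_label(probs, threshold=0.95):
    """Return the argmax class if the prediction is confident enough, else None."""
    confidence = max(probs)
    return probs.index(confidence) if confidence >= threshold else None


def cross_entropy(probs, label):
    """Negative log-likelihood of the given label (clamped for stability)."""
    return -math.log(max(probs[label], 1e-12))


def cross_model_loss(primary_weak, aux_weak, primary_strong, aux_strong,
                     threshold=0.95):
    """Each model is supervised by the other model's confident pseudo-label."""
    loss_primary = 0.0
    loss_aux = 0.0
    label_from_aux = pseudo_label(aux_weak, threshold)
    label_from_primary = pseudo_label(primary_weak, threshold)
    if label_from_aux is not None:
        # The auxiliary network's label supervises the primary network.
        loss_primary = cross_entropy(primary_strong, label_from_aux)
    if label_from_primary is not None:
        # The primary network's label supervises the auxiliary network.
        loss_aux = cross_entropy(aux_strong, label_from_primary)
    return loss_primary, loss_aux
```

The key difference from self-training is that neither model consumes its own pseudo-label, so errors specific to one architecture are less likely to be reinforced.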